Shattering Illusions: Debunking the 15 Biggest Myths About Data Quality in 2024

Unveiling the misconceptions surrounding data quality and how they impact businesses in Los Angeles and beyond.

The Quantity-Quality Conundrum: Why More Data Doesn’t Guarantee Better Insights

In the era of big data, it’s tempting to believe that more data equates to more accurate insights. However, this is one of the most pervasive data quality myths. What matters is the relevance and quality of the data rather than its sheer volume. Inaccurate or irrelevant data can lead to misguided decisions, no matter how much of it you have.

According to a recent study by McKinsey & Company, companies that focus on insight-driven strategies report above-market growth. This highlights the importance of quality over quantity for data-driven decision making in Los Angeles. It’s not about having the most data; it’s about having the right data.

Businesses must strive for a balance where they collect sufficient data to inform their decisions while ensuring its accuracy and applicability. This means implementing robust data quality management practices to filter out the noise and enhance the signal.

Beyond IT: The Cross-Functional Nature of Data Quality Management in LA

There’s a common misconception that data quality is a concern exclusive to the IT department. However, data quality is a cross-functional discipline that impacts every aspect of an organization. Sales, marketing, customer service, and even human resources all rely on accurate data to perform effectively.

As such, data quality management requires a collaborative effort. It’s not just about the technology used to collect and store data, but also about the processes and policies that govern its use throughout the organization.

By fostering a culture where data quality is everyone’s responsibility, businesses can ensure that their data is reliable, leading to better outcomes and a competitive edge in the market.

Beyond Technology: Organizational and Procedural Roots of Data Quality Issues

While technology plays a crucial role in managing data quality, it’s not the sole factor. Organizational and procedural issues often lie at the heart of data quality problems. Without proper data governance and management practices in place, even the most advanced technologies will fall short.

Effective data quality management involves a holistic approach that includes setting clear standards, establishing data ownership, and creating a culture of continuous improvement. This ensures that data quality is maintained at every level of the organization.

By addressing the root causes of data quality issues, organizations can build a strong foundation for their data governance strategies in 2024, positioning themselves for success in an increasingly data-driven world.

The Human Element: Why Automated Tools Aren’t a Panacea for Data Quality

Automation has revolutionized the way we manage data, but it’s not a cure-all. The human element remains crucial in the realm of data quality. Automated tools can help identify and rectify issues, but they lack the nuanced understanding that human oversight provides.

Humans can interpret context, understand the implications of data issues, and provide the critical thinking necessary to resolve complex problems. This is why a balanced approach that leverages both technology and human expertise is essential for improving data accuracy.

Incorporating the human element into data quality management ensures that the nuances and complexities of data are fully understood and addressed, leading to more reliable and actionable insights.

The Myth of Permanence: Understanding Data Decay and Continuous Quality Management

Data is not static; it’s subject to decay over time. This means that even if data is clean and accurate today, it may not be tomorrow. The myth of data permanence is a dangerous one, as it can lead to complacency in data management practices.

Continuous quality management is the antidote to data decay. By regularly reviewing and updating data, organizations can maintain its accuracy and relevance. This ongoing effort is crucial for businesses that rely on data to inform their strategies and decisions.

Recognizing the impermanent nature of data is the first step in establishing a proactive approach to data quality management, ensuring that data remains a valuable asset for the organization.
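
One way to make regular review concrete is to record when each record was last verified and flag anything older than a chosen threshold. The following is a minimal sketch under stated assumptions: the `last_verified` field, the 180-day window, and the sample records are all hypothetical and would need to match an organization's own data and review cadence.

```python
from datetime import date, timedelta


def find_stale_records(records, today, max_age_days=180):
    """Return the records whose last verification is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [r for r in records if r["last_verified"] < cutoff]


# Hypothetical customer records; the field names are illustrative only.
records = [
    {"id": 1, "email": "a@example.com", "last_verified": date(2024, 1, 10)},
    {"id": 2, "email": "b@example.com", "last_verified": date(2022, 6, 1)},
]

# Passing the date in explicitly keeps the check reproducible.
stale = find_stale_records(records, today=date(2024, 3, 1))
stale_ids = [r["id"] for r in stale]
```

Records flagged this way could be queued for re-verification rather than deleted, since stale data is not necessarily wrong data.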

The Cost Illusion: Investing in Data Quality vs. The High Price of Inaccuracy

Some organizations view investments in data quality as an unnecessary expense. However, the cost of poor data quality can far exceed the investment required to maintain high standards. Inaccurate data can lead to wasted resources, missed opportunities, and damaged reputations.

An investment in data quality is an investment in the future of the business. By ensuring that data is accurate and reliable, organizations can make informed decisions, optimize operations, and stay ahead of the competition.

The real cost is not in the investment in data quality, but in the consequences of neglecting it. Organizations that recognize this will be better positioned to thrive in the data-driven landscape of 2024 and beyond.

The Ripple Effect of Small Errors: Improving Data Accuracy for Big Impact

Even small errors in data can have a significant impact on an organization. These minor inaccuracies can compound over time, leading to major issues down the line. It’s the ripple effect of data inaccuracy, and it can be detrimental to a business’s success.

By focusing on improving data accuracy from the outset, organizations can prevent these ripples from turning into waves. It’s about being meticulous in data entry, validation, and maintenance to ensure that even the smallest details are correct.

This attention to detail may seem tedious, but it’s essential for maintaining the integrity of data and the decisions that are based on it. The effort put into improving data accuracy pays off in the form of reliable insights and a robust foundation for decision-making.
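
Validation at the point of entry can be sketched as a small function that checks each incoming record and reports what is wrong with it. This is an illustration rather than a complete rule set: the field names (`name`, `email`, `quantity`) and the deliberately simple email pattern are assumptions chosen for the example.

```python
import re

# A deliberately loose pattern: something@something.something, no spaces.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_record(record):
    """Return a list of problems found in one record (empty if none)."""
    errors = []

    name = record.get("name") or ""
    if not name.strip():
        errors.append("name is missing")

    email = record.get("email") or ""
    if not EMAIL_PATTERN.match(email):
        errors.append("email is malformed")

    quantity = record.get("quantity")
    # bool is a subclass of int in Python, so it is excluded explicitly.
    if not isinstance(quantity, int) or isinstance(quantity, bool) or quantity < 0:
        errors.append("quantity must be a non-negative integer")

    return errors


good = validate_record({"name": "Ada", "email": "ada@example.com", "quantity": 3})
bad = validate_record({"name: ".strip(": "): " ", "email": "ada@example", "quantity": -1})
```

Returning every problem at once, instead of stopping at the first, lets whoever entered the record correct it in a single pass.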

Compliance vs. Excellence: Why Data Governance Strategies 2024 Go Beyond Regulations

Compliance with regulations is often seen as the primary goal of data governance. However, leading organizations understand that compliance is just the starting point. Excellence in data governance goes beyond meeting regulatory requirements and focuses on maximizing the value of data.

Data governance strategies in 2024 must be designed not only to ensure compliance but also to foster innovation, efficiency, and competitive advantage. This proactive approach to governance makes data a strategic asset rather than a mere compliance checkbox.

Organizations that aim for excellence in data governance will be the ones that truly capitalize on their data, leveraging it to drive growth and success in an increasingly competitive landscape.

Perfect Data: A Mythical Necessity in Data-Driven Decision Making in Los Angeles

The pursuit of perfect data is a myth that can hinder organizations from taking action. While data quality is critical, waiting for data to be flawless can result in missed opportunities and a lack of agility.

For data-driven decision making in Los Angeles and beyond, it’s important to recognize that perfect data is an ideal, not a requirement. Organizations must learn to operate with the best available data, making informed decisions while continuously working to improve data quality.

By embracing imperfection and focusing on continuous improvement, businesses can remain dynamic and responsive, turning data into a powerful tool for innovation and growth.

Data Quality as a Strategic Business Driver: Aligning Accuracy with Objectives

Data quality should not be seen as a mere technical issue; it’s a strategic business driver that can align with and support an organization’s objectives. High-quality data enables better decision-making, more efficient operations, and improved customer experiences.

Organizations that integrate data quality into their strategic planning are better equipped to achieve their goals. By aligning data accuracy with business objectives, they can ensure that their data supports their mission and drives success.

As we move into 2024, the organizations that view data quality as a strategic imperative will be the ones that set themselves apart in the marketplace.

The Unstructured Data Challenge: Addressing Quality Issues Beyond the Structured Realm

While much attention is given to structured data, unstructured data presents its own set of quality challenges. This includes data from sources such as social media, emails, and text documents, which are not easily categorized or analyzed.

Addressing quality issues in unstructured data requires advanced tools and techniques that can interpret and organize this information. It also requires a strategic approach that recognizes the value of unstructured data and integrates it into overall data quality management practices.

As businesses continue to grapple with vast amounts of unstructured data, those that develop effective strategies for managing its quality will gain a significant advantage in deriving insights and making informed decisions.

Executive Engagement: The Critical Role of Leadership in Data Quality Initiatives

Executive engagement is crucial for the success of data quality initiatives. Leadership must be actively involved in setting the vision, allocating resources, and championing data quality across the organization.

Leaders who understand the importance of data quality can drive change and foster a culture where data is valued and properly managed. Their support is essential in building the momentum needed to implement effective data quality strategies.

As data becomes an increasingly important asset, the role of executives in guiding data quality efforts will be more critical than ever. Their leadership can make the difference between a thriving data-driven organization and one that is left behind.

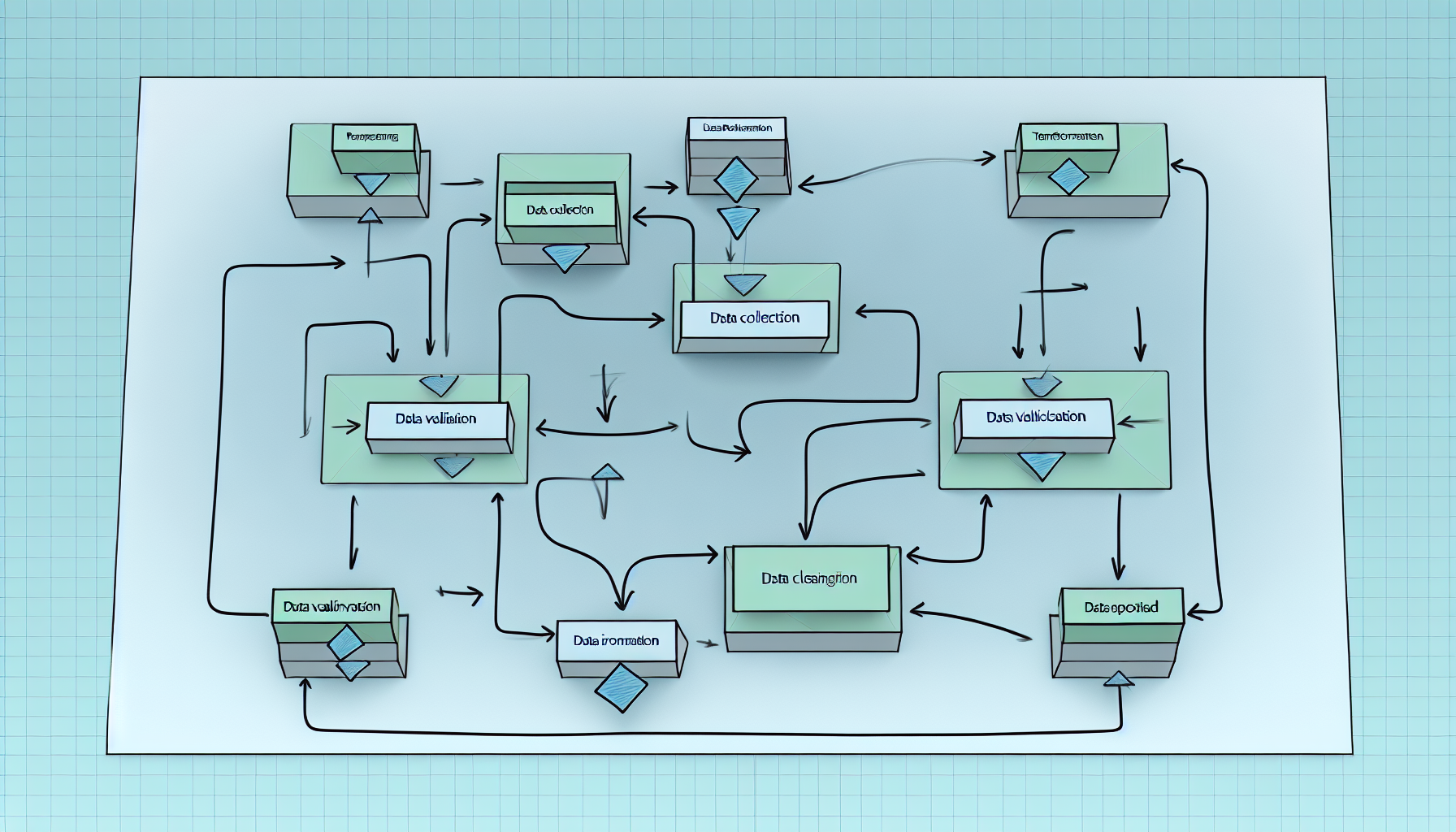

Comprehensive Data Quality: From Cleaning to Preventative Data Governance Strategies 2024

Data quality is not just about cleaning up data; it’s about implementing preventative measures that ensure data remains clean and useful. This shift from reactive to proactive data governance is a key trend for 2024.

Comprehensive data quality involves the entire lifecycle of data, from creation to retirement. By embedding quality controls at every stage, organizations can prevent issues before they arise and maintain a high standard of data integrity.

With preventative data governance strategies, businesses can minimize the need for data cleaning and focus on leveraging their high-quality data for strategic advantage.

Measuring Success: Establishing Clear Metrics for Data Quality Management in LA

Measuring the success of data quality management efforts can be challenging, but it’s essential for continuous improvement. Establishing clear metrics allows organizations to track progress, identify areas for enhancement, and demonstrate the value of their data quality initiatives.

These metrics should be aligned with business objectives and reflect the specific needs and goals of the organization. By regularly reviewing these metrics, businesses can make informed adjustments to their data quality strategies and ensure they are driving the desired outcomes.

As data quality becomes an increasingly important focus for businesses, those that can effectively measure and manage their data quality will be well-positioned for success.
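
As an illustration of what clear metrics might look like, the sketch below computes two commonly used measures: completeness (how often a field is filled in) and uniqueness (how many of the filled-in values are distinct). The definitions, the `email` field, and the sample records are assumptions made for this example; the right metrics and thresholds depend on each organization's objectives.

```python
def completeness(records, field):
    """Share of records in which `field` is present and non-empty."""
    if not records:
        return 0.0
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return filled / len(records)


def uniqueness(records, field):
    """Share of non-empty values of `field` that are distinct."""
    values = [r[field] for r in records if r.get(field) not in (None, "")]
    if not values:
        return 0.0
    return len(set(values)) / len(values)


# Hypothetical contact list with one duplicate and one blank email.
contacts = [
    {"email": "a@example.com"},
    {"email": "a@example.com"},
    {"email": ""},
    {"email": "b@example.com"},
]

email_completeness = completeness(contacts, "email")
email_uniqueness = uniqueness(contacts, "email")
```

Tracking figures like these over time, rather than as one-off snapshots, is what makes it possible to see whether a data quality initiative is actually moving things in the right direction.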

Technological Evolution and Data Quality: Why New Tech Demands Vigilant Governance

The rapid pace of technological evolution presents both opportunities and challenges for data quality. New technologies can enhance data management capabilities, but they also require vigilant governance to ensure that data quality standards are maintained.

As organizations adopt new technologies, they must also update their data governance frameworks to accommodate these changes. This includes considering the implications of artificial intelligence, machine learning, and other emerging technologies on data quality.

By staying ahead of technological trends and adapting governance strategies accordingly, organizations can ensure that their data quality remains robust in the face of constant change.